I remember it well. As a young researcher I presented my findings in one of my first talks, at the end of which the chair killed my work with a remark that made the whole room of scientists laugh but was really beside the point. My supervisor, a truly original and very wise scientist, suppressed his anger. Afterwards, he said: “It is very easy to ridicule something that isn’t mainstream thought. It’s the argument that counts. We will prove that we are right.” …And we did.

This was not my only encounter with scientists who try to win a debate by making fun of a theory, a finding or …people. But it is not only the witty scientist who is to *blame*; the uncritical audience that just swallows it is to blame as well.

I have similar feelings about some journal articles or blog posts that try to ridicule EBM, or any other theory or approach. Funny, perhaps, but often misunderstood and misused by “the audience”.

Take for instance the well known spoof article in the BMJ:

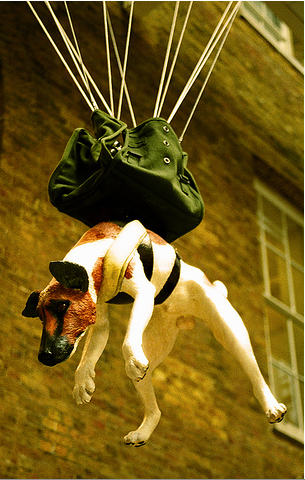

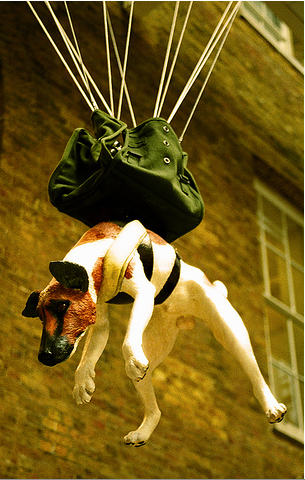

“Parachute use to prevent death and major trauma related to gravitational challenge: systematic review of randomised controlled trials”

It is one of those Christmas spoof articles in the BMJ, meant to inject some medical humor into the normally serious scientific literature. The spoof parachute article pretends to be a Systematic Review of RCT’s investigating whether parachutes can prevent death and major trauma. Of course, no such trial has been done or will be done: dropping people at random with and without a parachute to prove that you had better jump out of a plane with one.

I found the article only mildly amusing. It is so unrealistic that it becomes absurd. Not that I don’t enjoy absurdities at times, but absurdities should not assume a life of their own. Used this way, the article doesn’t provoke a true discussion; it only reinforces the prejudice some people already have.

People keep referring to this 2003 article. Last Friday, Dr. Val (with whom I mostly agree) devoted a Friday Funny post to it at Get Better Health: “The Friday Funny: Why Evidence-Based Medicine Is Not The Whole Story”.* In 2008 the paper was also discussed by Not Totally Rad [3]. That EBM is not the whole story seems pretty obvious to me. It was never meant to be…

But let’s get specific. Which assumptions about RCT’s and SR’s are wrong, twisted or taken out of context? Please read the excellent comments below the article. These often hit the nail on the head.

1. EBM is cookbook medicine.

Many define EBM as “make clinical decisions based on a synthesis of the best available evidence about a treatment.” (e.g. [3]). However, EBM is not cookbook medicine.

The accepted definition of EBM is “the conscientious, explicit and judicious use of current best evidence in making decisions about the care of individual patients” [4]. Sackett already emphasized back in 1996:

Good doctors use both individual clinical expertise and the best available external evidence, and neither alone is enough. Without clinical expertise, practice risks becoming tyrannised by evidence, for even excellent external evidence may be inapplicable to or inappropriate for an individual patient. Without current best evidence, practice risks becoming rapidly out of date, to the detriment of patients.

2. RCT’s are required for evidence.

Although a well-performed RCT provides the “best” evidence, RCT’s are often not appropriate or indicated. That is especially true for domains other than therapy. For prognostic questions the most appropriate study design is usually an inception cohort. An RCT, for instance, can’t tell whether female age is a prognostic factor for clinical pregnancy rates following IVF: there is no way to randomize for “age”, or for “BMI”. 😉

The same is true for etiologic or harm questions. In theory, the “best” answer is obtained by RCT. However, RCT’s are often unethical or unnecessary. An RCT is out of the question when asking whether substance X causes cancer. Observational studies will do. Sometimes case reports provide sufficient evidence. If a woman gets hepatic veno-occlusive disease after drinking loads of a herbal tea, the finding of similar cases in the literature may be sufficient to conclude that the herbal tea probably caused the disease.

Diagnostic accuracy studies also require another study design (cross-sectional study, or cohort).

But even in the case of interventions, we can settle for less than an RCT. Evidence is not simply present or absent; it exists on a hierarchy. RCT’s (if well performed) are the most robust, but if none are available we have to rely on “lower” evidence.

BMJ Clinical Evidence even made a list of clinical questions unlikely to be answered by RCT’s. In this case Clinical Evidence searches and includes the best appropriate form of evidence.

- where there are good reasons to think the intervention is not likely to be beneficial or is likely to be harmful;

- where the outcome is very rare (e.g. a 1/10000 fatal adverse reaction);

- where the condition is very rare;

- where very long follow up is required (e.g. does drinking milk in adolescence prevent fractures in old age?);

- where the evidence of benefit from observational studies is overwhelming (e.g. oxygen for acute asthma attacks);

- when applying the evidence to real clinical situations (external validity);

- where current practice is very resistant to change and/or patients would not be willing to take the control or active treatment;

- where the unit of randomisation would have to be too large (e.g. a nationwide public health campaign); and

- where the condition is acute and requires immediate treatment.

Of these, only the first case is categorical. For the rest, the cut-off point at which an RCT is no longer appropriate is not precisely defined.
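To get a feel for the “very rare outcome” case, here is a rough back-of-the-envelope sketch. It is my own illustration, not part of the Clinical Evidence list, and the function name `patients_needed` is made up for the occasion. It assumes every patient independently has the same chance of the adverse event, and asks how many patients must be followed to have a 95% chance of seeing the event even once.

```python
import math


def patients_needed(event_rate: float, confidence: float = 0.95) -> int:
    """Smallest n with P(at least one event among n patients) >= confidence.

    Assumes independent patients who all share the same event probability.
    """
    # P(no event in n patients) = (1 - event_rate) ** n.
    # Require that to be <= 1 - confidence and solve for n.
    n = math.log(1.0 - confidence) / math.log(1.0 - event_rate)
    return math.ceil(n)


if __name__ == "__main__":
    rate = 1 / 10_000  # the 1/10000 fatal adverse reaction from the list above
    print(patients_needed(rate))  # roughly 3 / rate, i.e. about 30,000 patients
```

So a trial arm would need on the order of 30,000 patients just to be reasonably sure of observing a single such reaction, let alone comparing rates between arms. That is why observational data usually have to answer this kind of question.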

3. Informed health decisions should be based on good science rather than EBM (alone).

Dr Val [2]: “EBM has been an over-reliance on “methodolatry” – resulting in conclusions made without consideration of prior probability, laws of physics, or plain common sense. (….) Which is why Steve Novella and the Science Based Medicine team have proposed that our quest for reliable information (upon which to make informed health decisions) should be based on good science rather than EBM alone.”

Methodolatry is the profane worship of the randomized clinical trial as the only valid method of investigation. This is disproved in the previous sections.

The name “Science Based Medicine” suggests that it is opposed to “Evidence Based Medicine”. At their blog David Gorski explains: “We at SBM believe that medicine based on science is the best medicine and tirelessly promote science-based medicine through discussion of the role of science and medicine.”

While this may apply to a certain extent to quackery or homeopathy (the focus of SBM), there are many examples of the opposite: science or common sense leading to interventions that were ineffective or even damaging.

As a matter of fact many side-effects are not foreseen and few in vitro or animal experiments have led to successful new treatments.

In the end, what is most relevant to the patient is that “it works” (and that the benefits outweigh the harms).

Furthermore, EBM is not (or should not be) practised without consideration of prior probability, laws of physics, or plain common sense. To me SBM and EBM are not mutually exclusive.

Why the example is ~~bullshit~~ unfair and unrealistic

I’ll leave it to the following comments (and yes the choice is biased) [1]

Nibu A George, Scientist:

First of all generalizing such reports of some selected cases and making it a universal truth is unhealthy and challenging the entire scientific community. Secondly, the comparing the parachute scenario with a pure medical situation is unacceptable since the parachute jump is rather a physical situation and it become a medical situation only if the jump caused any physical harm to the person involved.

Richard A. Davidson, MD, MPH:

This weak attempt at humor unfortunately reinforces one of the major negative stereotypes about EBM….that RCT’s are required for evidence, and that observational studies are worthless. If only 10% of the therapies that are paraded in front of us by journals were as effective as parachutes, we would have much less need for EBM. The efficacy of most of our current therapies are only mildly successful. In fact, many therapies can provide only a 25% or less therapeutic improvement. If parachutes were that effective, nobody would use them.

While it’s easy enough to just chalk this one up to the cliche of the cantankerous British clinician, it shows a tremendous lack of insight about what EBM is and does. Even worse, it’s just not funny.

Aviel Roy-Shapira, Senior Staff Surgeon

Smith and Pell succeeded in amusing me, but I think their spoof reflects a common misconception about evidence based medicine. All too many practitioners equate EBM with randomized controlled trials, and metaanalyses.

EBM is about what is accepted as evidence, not about how the evidence is obtained. For example, an RCT which shows that a given drug lowers blood pressure in patients with mild hypertension, however well designed and executed, is not acceptable as a basis for treatment decisions. One has to show that the drug actually lowers the incidence of strokes and heart attacks.

RCT’s are needed only when the outcome is not obvious. If most people who fall from airplanes without a parachute die, this is good enough. There is plenty of evidence for that.

EBM is about using outcome data for making therapeutic decisions. That data can come from RCTs but also from observation

Lee A. Green, Associate Professor

EBM is not RCTs. That’s probably worth repeating several times, because so often both EBM’s detractors and some of its advocates just don’t get it. Evidence is not binary, present or not, but exists on a heirarchy (Guyatt & Rennie, 2001). (….)

The methods and rigor of EBM are nothing more or less than ways of correcting for our imperfect perceptions of our experiences. We prefer, cognitively, to perceive causal connections. We even perceive such connections where they do not exist, and we do so reliably and reproducibly under well-known sets of circumstances. RCTs aren’t holy writ, they’re simply a tool for filtering out our natural human biases in judgment and causal attribution. Whether it’s necessary to use that tool depends upon the likelihood of such bias occurring.

Scott D Ramsey, Associate Professor

Parachutes may be a no-brainer, but this article is brainless.

Unfortunately, there are few if any parallels to parachutes in health care. The danger with this type of article is that it can lead to labeling certain medical technologies as “parachutes” when in fact they are not. I’ve already seen this exact analogy used for a recent medical technology (lung volume reduction surgery for severe emphysema). In uncontrolled studies, it quite literally looked like everyone who didn’t die got better. When a high quality randomized controlled trial was done, the treatment turned out to have significant morbidity and mortality and a much more modest benefit than was originally hypothesized.

Timothy R. Church, Professor

On one level, this is a funny article. I chuckled when I first read it. On reflection, however, I thought “Well, maybe not,” because a lot of people have died based on physicians’ arrogance about their ability to judge the efficacy of a treatment based on theory and uncontrolled observation.

Several high profile medical procedures that were “obviously” effective have been shown by randomized trials to be (oops) killing people when compared to placebo. For starters to a long list of such failed therapies, look at antiarrhythmics for post-MI arrhythmias, prophylaxis for T. gondii in HIV infection, and endarterectomy for carotid stenosis; all were proven to be harmful rather than helpful in randomized  trials, and in the face of widespread opposition to even testing them against no treatment. In theory they “had to work.” But didn’t.

But what the heck, let’s play along. Suppose we had never seen a parachute before. Someone proposes one and we agree it’s a good idea, but how to test it out? Human trials sound good. But what’s the question? It is not, as the author would have you believe, whether to jump out of the plane without a parachute or with one, but rather stay in the plane or jump with a parachute. No one was voluntarily jumping out of planes prior to the invention of the parachute, so it wasn’t to prevent a health threat, but rather to facilitate a rapid exit from a nonviable plane.

Another weakness in this straw-man argument is that the physics of the parachute are clear and experimentally verifiable without involving humans, but I don’t think the authors would ever suggest that human physiology and pathology in the face of medication, radiation, or surgical intervention is ever quite as clear and predictable, or that non-human experience (whether observational or experimental) would ever suffice.

The author offers as an alternative to evidence-based methods the “common sense” method, which is really the “trust me, I’m a doctor” method. That’s not worked out so well in many high profile cases (see above, plus note the recent finding that expensive, profitable angioplasty and coronary artery by-pass grafts are no better than simple medical treatment of arteriosclerosis). And these are just the ones for which careful scientists have been able to do randomized trials. Most of our accepted therapies never have been subjected to such scrutiny, but it is breathtaking how frequently such scrutiny reveals problems.

Thanks, but I’ll stick with scientifically proven remedies.

* on the same day as I posted Friday Foolery #15: The Man who pioneered the RCT. What a coincidence.

** Don’t forget to read the comments to the article. They are often excellent.

References

1. Smith, G. C. S., & Pell, J. P. (2003). Parachute use to prevent death and major trauma related to gravitational challenge: systematic review of randomised controlled trials. BMJ, 327 (7429), 1459-1461. DOI: 10.1136/bmj.327.7429.1459

2. “The Friday Funny: Why Evidence-Based Medicine Is Not The Whole Story” (getbetterhealth.com) [2010.01.29]

3. Call for randomized clinical trials of Parachutes (nottotallyrad.blogspot.com) [08-2008]

4. Sackett DL, Rosenberg WM, Gray JA, Haynes RB, & Richardson WS (1996). Evidence based medicine: what it is and what it isn’t. BMJ (Clinical research ed.), 312 (7023), 71-2. PMID: 8555924
