For many of today’s busy practicing clinicians, keeping up with the enormous and ever-growing amount of medical information poses substantial challenges [6]. It’s impractical to do a PubMed search to answer each clinical question and then synthesize and appraise the evidence, simply because busy health care providers have limited time and many questions per day.

As repeatedly mentioned on this blog ([6–7]), it is far more efficient to try to find aggregate (or pre-filtered or pre-appraised) evidence first.

Haynes ‘‘5S’’ levels of evidence (adapted by [1])

There are several forms of aggregate evidence, often represented as the higher layers of an evidence pyramid (because they aggregate individual studies, represented by the lowest layer). Confusingly, however, there are many pyramids [8], with different kinds of hierarchies and based on different principles.

According to the “5S” paradigm [9] (now evolved to 6S [10]), the peak of the pyramid is formed by the ideal but not yet realized computer decision support systems, which link individual patient characteristics to the current best evidence. According to the 5S model, the next best sources are Evidence Based Textbooks, the category that covers online point-of-care summaries (POCs).

(Note: EBM and textbooks almost seem a contradiction in terms to me. Personally, I would not put many of the POCs anywhere near the top. Also see my post: How Evidence Based is UpToDate really?)

Whatever their exact place in the EBM-pyramid, these POCs are helpful to many clinicians. There are many different POCs (see HLWIKI Canada for a comprehensive overview [11]) with a wide range of costs, varying from free with ads (eMedicine) to very expensive site licenses (UpToDate). Because of the costs, hospital libraries have to choose among them.

Choices are often based on user preferences and satisfaction, balanced against costs, scope of coverage, etc. They are often subjective, and people tend to stick to the databases they know.

Initial literature about POCs concentrated on user preferences and satisfaction. A New Zealand study [3] among 84 GPs showed no significant difference in preference for, or usage levels of, DynaMed, MD Consult (including FirstConsult) and UpToDate. The proportion of questions adequately answered by POCs differed per study (see the introduction of [4] for an overview), varying from 20% to 70%.

McKibbon and Fridsma ([5] cited in [4]) found that the information resources chosen by primary care physicians were seldom helpful in providing the correct answers, leading them to conclude that:

“…the evidence base of the resources must be strong and current…We need to evaluate them well to determine how best to harness the resources to support good clinical decision making.”

Recent studies have tried to objectively compare online point-of-care summaries with respect to their breadth, content development, editorial policy, the speed of updating and the type of evidence cited. I will discuss 3 of these recent papers, but will review each paper separately. (My posts tend to be pretty long and in-depth. So in an effort to keep them readable I try to cut down where possible.)

Two of the three papers were published by Rita Banzi and colleagues from the Italian Cochrane Centre.

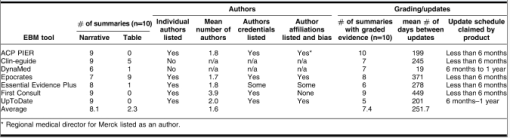

In the first paper, reviewed here, Banzi et al [1] first identified English Web-based POCs using Medline, Google, librarian association websites, and information conference proceedings from January to December 2008. In order to be eligible, a product had to be an online-delivered summary that is regularly updated, claims to provide evidence-based information and is to be used at the bedside.

They retrieved 30 candidate POCs, of which the following 18 databases met the inclusion criteria: 5-Minute Clinical Consult, ACP-Pier, BestBETs, CKS (NHS), Clinical Evidence, DynaMed, eMedicine, eTG complete, EBM Guidelines, First Consult, GP Notebook, Harrison’s Practice, Health Gate, Map Of Medicine, Micromedex, Pepid, UpToDate, ZynxEvidence.

They assessed and ranked these 18 point-of-care products according to: (1) coverage (volume) of medical conditions, (2) editorial quality, and (3) evidence-based methodology. (For operational definitions see appendix 1)

From a quantitative perspective, DynaMed, eMedicine, and First Consult were the most comprehensive (88%) and eTG complete the least (45%).

The best editorial quality of EBP was delivered by Clinical Evidence (15), UpToDate (15), eMedicine (13), DynaMed (11) and eTG complete (10). (Scores are shown in brackets.)

Finally, BestBETs, Clinical Evidence, EBM Guidelines and UpToDate obtained the maximal score (15 points each) for best evidence-based methodology, followed by DynaMed and Map Of Medicine (12 points each).

As expected eMedicine, eTG complete, First Consult, GP Notebook and Harrison’s Practice had a very low EBM score (1 point each). Personally I would not have even considered these online sources as “evidence based”.

The calculations seem very “exact”, but assumptions upon which these figures are based are open to question in my view. Furthermore all items have the same weight. Isn’t the evidence-based methodology far more important than “comprehensiveness” and editorial quality?

This is especially so because “volume” is “just” estimated by analyzing the extent to which 4 random chapters of the ICD-10 classification are covered by the POCs. Some sources, like Clinical Evidence and BestBETs (scoring low for this item), don’t aim to be comprehensive but only “answer” a limited number of questions: they are not textbooks.
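To make the weighting concern concrete, here is a minimal sketch of a composite score, using only figures quoted in this post for the three products where all three dimensions are mentioned. This is not a calculation from the paper: the `composite` function, the rescaling of each dimension to 0..1 (assuming both point scales run to 15) and the 1:1:3 weights are my own hypothetical choices for illustration.

```python
# (volume %, editorial quality /15, EBM methodology /15), figures quoted in this post.
SCORES = {
    "DynaMed": (88, 11, 12),
    "eMedicine": (88, 13, 1),
    "eTG complete": (45, 10, 1),
}


def composite(volume_pct, editorial, ebm, weights=(1.0, 1.0, 1.0)):
    """Weighted mean of the three dimensions, each rescaled to 0..1.

    Hypothetical helper: the rescaling and the weights are assumptions,
    not something reported by Banzi et al.
    """
    parts = (volume_pct / 100, editorial / 15, ebm / 15)
    return sum(w * p for w, p in zip(weights, parts)) / sum(weights)


for name, dims in SCORES.items():
    equal = composite(*dims)
    # Hypothetical weighting: EBM methodology counts three times as much.
    ebm_heavy = composite(*dims, weights=(1.0, 1.0, 3.0))
    print(f"{name:13s} equal={equal:.2f} ebm_heavy={ebm_heavy:.2f}")
```

With these numbers, eMedicine drops from about 0.60 to about 0.39 once EBM methodology is weighted three times as heavily, whereas DynaMed stays around 0.80. How the dimensions are weighted clearly matters for products that are comprehensive but weak on methodology.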

Editorial quality is determined by scoring of the specific indicators of transparency: authorship, peer reviewing procedure, updating, disclosure of authors’ conflicts of interest, and commercial support of content development.

For the EB methodology, Banzi et al scored the following indicators:

- Is a systematic literature search or surveillance the basis of content development?

- Is the critical appraisal method fully described?

- Are systematic reviews preferred over other types of publication?

- Is there a system for grading the quality of evidence?

- When expert opinion is included, is it easily recognizable over studies’ data and results?

The score for each of these indicators is 3 for “yes”, 1 for “unclear”, and 0 for “no” (if judged “not adequate” or “not reported”).
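The scoring rule above boils down to simple arithmetic, sketched here in Python. The indicator labels and the `ebm_score` function are my own shorthand, not names used by Banzi et al; only the 3/1/0 point values and the five indicators come from the paper as described above.

```python
# Points per judgement, as described in the text: yes=3, unclear=1, no=0.
POINTS = {"yes": 3, "unclear": 1, "no": 0}

# Shorthand labels for the five indicators (labels are hypothetical).
INDICATORS = (
    "systematic_search",
    "appraisal_described",
    "systematic_reviews_preferred",
    "evidence_grading",
    "expert_opinion_recognizable",
)


def ebm_score(judgements):
    """Sum the points over all five indicators (maximum 5 * 3 = 15)."""
    missing = [name for name in INDICATORS if name not in judgements]
    if missing:
        raise ValueError(f"missing judgements: {missing}")
    return sum(POINTS[judgements[name]] for name in INDICATORS)


all_yes = {name: "yes" for name in INDICATORS}
one_no = dict(all_yes, systematic_reviews_preferred="no")

print(ebm_score(all_yes))  # 15
print(ebm_score(one_no))   # 12
```

A single “no” thus costs a fifth of the maximum score, regardless of which indicator it concerns or why it was judged that way.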

This leaves little room for qualitative differences and mainly relies upon adequate reporting. As discussed earlier in a post where I questioned the evidence-based-ness of UpToDate, there is a difference between tailored searches and checking a limited list of sources (indicator 1). It also matters whether the search is mentioned or not (transparency), whether it is qualitatively ok and whether it is extensive or not. For lists, it matters how many sources are “surveyed”. It also matters whether one or both methods are used… These important differences are not reflected by the scores.

Furthermore, some points may be more important than others. Personally I find indicator 1 the most important. For what good is appraising and grading if it isn’t applied to the most relevant evidence? It is “easy” to do a grading or to copy it from other sources (yes, I wouldn’t be surprised if some POCs are doing this).

On the other hand, a zero for one indicator can have too much weight on the score.

DynaMed got 12 instead of the maximum 15 points, because their editorial policy page didn’t explicitly describe their absolute prioritization of systematic reviews, although they really adhere to that in practice (see comment by editor-in-chief Brian Alper [2]). Had DynaMed received the deserved 3 points for this indicator, bringing its total to 15, they would have had the highest score overall.

The authors further conclude that none of the dimensions turned out to be significantly associated with the other dimensions. For example, BestBETs scored among the worst on volume (comprehensiveness), with an intermediate score for editorial quality and the highest score for evidence-based methodology. Overall, DynaMed, EBM Guidelines, and UpToDate scored in the top quartile for 2 out of 3 variables and in the 2nd quartile for the 3rd of these variables. (But as explained above, DynaMed really scored in the top quartile for all 3 variables.)

On the basis of their findings, Banzi et al conclude that only a few POCs satisfied the criteria, with none excelling in all.

The finding that Pepid, eMedicine, eTG complete, First Consult, GP Notebook, Harrison’s Practice and 5-Minute Clinical Consult only obtained 1 or 2 of the maximum 15 points for EBM methodology confirms my “intuitive grasp” that these sources really don’t deserve the label “evidence based”. Perhaps we should make a stricter distinction between “point of care” databases, defined as a point where patients and practitioners interact, particularly referring to the context of the provider-patient dyad (definition by Banzi et al), and truly evidence-based summaries. Only a few of the tested databases would fit the latter definition.

In summary, Banzi et al reviewed 18 Online Evidence-based Practice Point-of-Care Information Summary Providers. They comprehensively evaluated and summarized these resources with respect to (1) coverage (volume) of medical conditions, (2) editorial quality, and (3) evidence-based methodology.

Limitations of the study, also according to the authors, were the lack of a clear definition of these products, the arbitrariness of the scoring system and the emphasis on the quality of reporting. Furthermore, the study didn’t really assess the products qualitatively (i.e. with respect to performance). Nor did it take into account that products might have a different aim. Clinical Evidence, for instance, only summarizes evidence on the effectiveness of treatments of a limited number of diseases. Therefore it scores poorly on volume while excelling on the other items.

Nevertheless, it is helpful that POCs are objectively compared, and this may help as a starting point for decisions about acquisition.

References (not in chronological order)

- Banzi, R., Liberati, A., Moschetti, I., Tagliabue, L., & Moja, L. (2010). A Review of Online Evidence-based Practice Point-of-Care Information Summary Providers. Journal of Medical Internet Research, 12 (3). DOI: 10.2196/jmir.1288

- Alper, B. (2010). Review of Online Evidence-based Practice Point-of-Care Information Summary Providers: Response by the Publisher of DynaMed. Journal of Medical Internet Research, 12 (3). DOI: 10.2196/jmir.1622

- Goodyear-Smith, F., Kerse, N., Warren, J., & Arroll, B. (2008). Evaluation of e-textbooks. DynaMed, MD Consult and UpToDate. Australian Family Physician, 37 (10), 878-82. PMID: 19002313

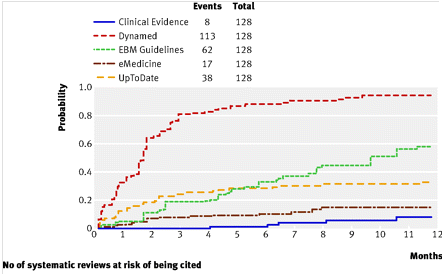

- Ketchum, A., Saleh, A., & Jeong, K. (2011). Type of Evidence Behind Point-of-Care Clinical Information Products: A Bibliometric Analysis. Journal of Medical Internet Research, 13 (1). DOI: 10.2196/jmir.1539

- McKibbon, K., & Fridsma, D. (2006). Effectiveness of Clinician-selected Electronic Information Resources for Answering Primary Care Physicians’ Information Needs. Journal of the American Medical Informatics Association, 13 (6), 653-659. DOI: 10.1197/jamia.M2087

- How will we ever keep up with 75 Trials and 11 Systematic Reviews a Day? (laikaspoetnik.wordpress.com)

- 10 + 1 PubMed Tips for Residents (and their Instructors) (laikaspoetnik.wordpress.com)

- Time to weed the (EBM-)pyramids?! (laikaspoetnik.wordpress.com)

- Haynes RB. Of studies, syntheses, synopses, summaries, and systems: the “5S” evolution of information services for evidence-based healthcare decisions. Evid Based Med 2006 Dec;11(6):162-164. [PubMed]

- DiCenso A, Bayley L, Haynes RB. ACP Journal Club. Editorial: Accessing preappraised evidence: fine-tuning the 5S model into a 6S model. Ann Intern Med. 2009 Sep 15;151(6):JC3-2, JC3-3. PubMed PMID: 19755349 [free full text].

- How Evidence Based is UpToDate really? (laikaspoetnik.wordpress.com)

- Point_of_care_decision-making_tools_-_Overview (hlwiki.slais.ubc.ca)

- UpToDate or Dynamed? (Shamsha Damani at laikaspoetnik.wordpress.com)
